Artificial Intelligence Development

A Complete Guide for Innovators

Artificial intelligence has been revolutionizing our lives for decades, but it’s officially become a part of everyday life. In our latest guide for innovators, we’re taking a clear-eyed look at what’s real and what isn’t to help you better understand AI and decide if it’s the right choice for your business.

Artificial intelligence. Machine learning. Deep learning. With so many different terms being used interchangeably, finding the time to separate AI signal from noise is difficult, and so is determining what’s critical knowledge for your next business or project.

Wanna chat about integrating AI into your business? Talk to us.

What is AI and what does it do?

Artificial intelligence (AI) simply refers to intelligent machines and programs. By using algorithms (a set of rules for solving problems), these smart devices and software craft their own solutions rather than spitting out predefined answers.

With AI, it’s by design that your open-ended interactions have open-ended results. If you’re playing chess against AI, make the same move in two different games and, just like a person, your digital opponent might respond in two different ways. However, an AI-less chess program only executes predefined moves, and its skills never improve.

What are the different types of AI?

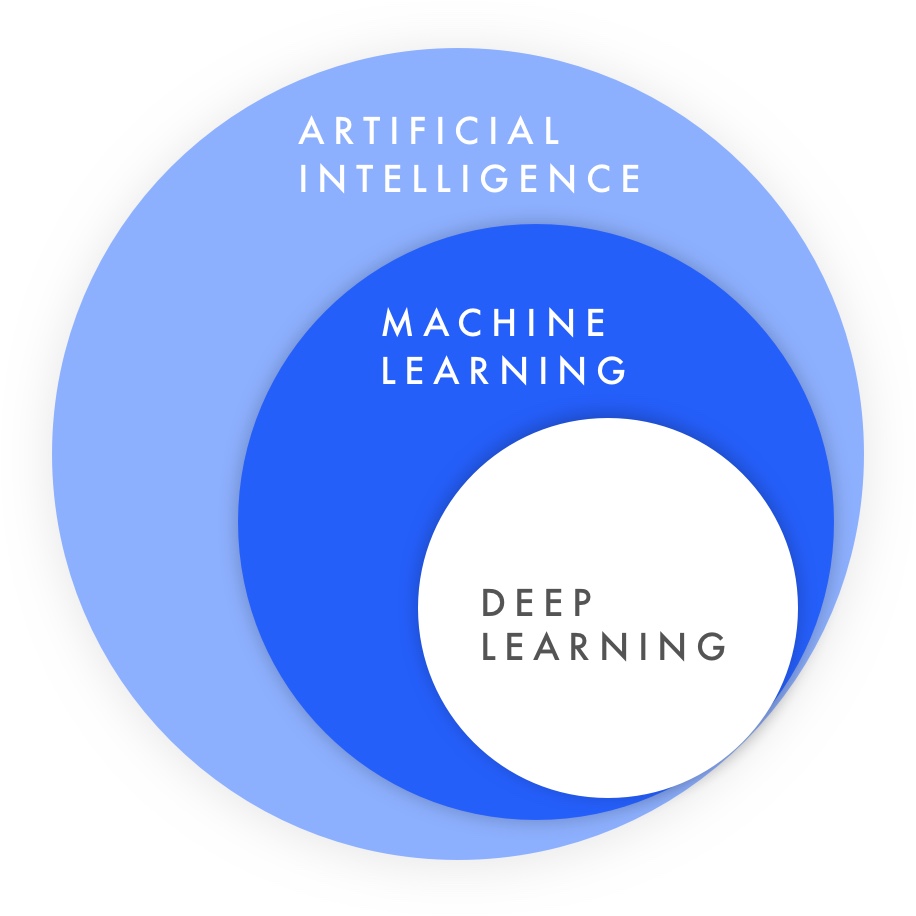

AI is a broad umbrella term, but inside this category, there are several kinds of artificial intelligence.

Machine learning (ML) and AI are often used interchangeably, but they’re different. AI is a broad, general term for intelligent machines. ML is specific — it refers to machines and programs which independently learn and evolve from the data they’re given. Think of AI as the overall field and ML as a specific discipline within it.

Importantly, these machines are using various mathematical models for best-guess predictions, and they’re very good at what they do. An image-recognition algorithm doesn’t inherently know it’s been given a picture of food. It’s trained on pictures of food until it “learns” with very high success rates what food looks like.

ML is also at AI’s forefront. While ML has been part of computer science since the 1950s, it wasn’t until the 2010s that adoption substantially increased. In fact, you’re probably using ML without even realizing it.

Want to learn if you’re already using AI? Let’s find out!

Some specific examples include:

- Smartphone predictive text: Phones learn how we construct sentences based upon sentence structure and vocab, then adapt over time with typing habits.

- Smart email responses: Use Google Inbox? You’ve probably tried “Smart Reply”. These quick, contextual email responses predict what’s relevant and get smarter based on your emails.

- Commute time prediction: Just like you know from experience that the 5 PM Wednesday commute is terrible, Google Maps uses its “experience” (anonymous map location data) to recommend the fastest route.

Many companies besides Google are using machine learning, too:

- Yelp: Image recognition helps business owners sort through customers’ photos by automatically organizing them under categories like “food”, “indoor” and “outdoor”.

- Etsy: Etsy acquired Blackbird Technologies to add search engine machine learning. Since then, their site has added more flexible keyword- and tag-oriented search.

- Uganda Wildlife Authority: Wildlife conservation might be a surprising scenario, but a University of Southern California team created PAWS, an AI system that predicts poaching routes.

Deep Learning

If machine learning is a subset of AI, then deep learning (DL) is an even more bleeding edge subset of this subset. Deep learning, along with new data coming in from social media, device sensors and other sources, is what pushed ML forward in the 2010s.

DL solves problems nonlinearly instead of top to bottom, and neural networks are one of the most powerful and common DL solutions.

In these models, artificial “neurons” (really just mathematical functions) are part of a connected and layered system which accepts inputs according to a mathematical function, passes them to adjacent “neurons” along weighted paths and outputs a value.

For example, an image of an outdoor picnic might be cut into smaller images and passed into a neural network for recognition. Each neuron looks at its assigned image, gives it a probability that it looks like “inside”, “outside” or “food”, then passes the image and probability on to the next connected neuron along a weighted pathway.

All the photo pieces and probabilities get passed through the neural network’s layers in a massive, parallel firing network of activity until some final probabilities emerge. Perhaps a neural network would decide it has a 75% chance of being outside, 5% inside, and 20% food. So, it tags the image as “outside,” then moves on to the next one.
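To make that last step concrete, here is a minimal Python sketch of a tiny two-layer network that turns an input into the three probabilities described above. Everything numeric in it is an assumption for illustration: the input features, the layer sizes and the hand-picked weights are made up, whereas a real image-recognition network learns a very large number of weights from training data.

```python
import math

LABELS = ["outside", "inside", "food"]

def dense(inputs, weights, biases):
    """Each "neuron" computes a weighted sum of its inputs plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

def relu(values):
    """Nonlinear activation: negative signals are cut off at zero."""
    return [max(0.0, v) for v in values]

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical input: three numeric features extracted from a photo.
features = [0.9, 0.2, 0.4]

# Hypothetical, hand-picked weights (a trained network would learn these).
hidden_weights = [[1.0, -1.0, 0.0], [0.0, 1.0, 1.0]]
hidden_biases = [0.0, 0.0]
output_weights = [[2.0, 0.0], [-2.0, 0.0], [0.0, 1.0]]
output_biases = [0.0, 0.0, 0.0]

# Pass the input through the hidden layer, then the output layer.
hidden = relu(dense(features, hidden_weights, hidden_biases))
probabilities = softmax(dense(hidden, output_weights, output_biases))

# Tag the photo with the most probable label.
tag = LABELS[probabilities.index(max(probabilities))]

for label, p in zip(LABELS, probabilities):
    print(f"{label}: {p:.0%}")
print("tag:", tag)
```

With these made-up weights the highest probability lands on “outside”, so that is the tag the photo receives. Training a network amounts to adjusting the weights until the tags match the labeled examples.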

Neural networks can be challenging to understand, but they’re very loosely inspired by our brains, at least in the sense that they’re a dense network of parallel, computational “nodes”.

Ultimately what matters most is the result: an extremely fast and powerful kind of deep learning which adapts well to many scenarios.

Even though this sounds like science fiction, you’re already using neural networks daily, including:

- Facial recognition: People would struggle to recognize similar faces in over 250 billion (and counting!) photos and accurately pinpoint them back to a Facebook profile, but that’s exactly what Facebook does.

- Speech-to-text and voice search: Speech-to-text was terrible for years. Now it’s everywhere, made possible by constantly-improving ML and neural networks. In an Oct 2016 white paper, Microsoft researchers said they’ve even developed tech to surpass human transcriptionists.

- Siri and digital assistants: Anytime you say “Hey Siri” in iOS 11, you’re using a “deep neural network”. It samples audio in small snippets and calculates the ongoing likelihood that you were summoning your digital assistant.

To quickly sum up the differences between AI, ML and DL:

- All deep learning is machine learning and artificial intelligence.

- All machine learning is artificial intelligence.

- But not all artificial intelligence is machine learning or deep learning.

How do you tell the difference between generalized ML and DL? Usually, it’s the kind of problem being solved. DL does very well with one-to-many (or “high cardinality”) relationships. In image recognition, for example, one unique person might appear in many photos (hundreds, thousands or more). In speech recognition it’s the same, but for one vowel sound and many words.

How do machines learn, anyway?

This topic deserves its own article, but we’ll summarize the three main ways machines are taught:

- Unsupervised

- Semi-supervised

- Supervised

(There’s a fourth method called reinforcement learning, but we’ll skip it for now.)

These methods might sound like a computer scientist sits in a classroom somewhere, peering over a robot’s shoulder. In reality, it’s just about how datasets are labeled.

Let’s say you’re trying to identify emotions in Tweets, known as sentiment analysis.

In supervised learning, every Tweet is hand-labeled with your desired category: happy, sad or neutral.

- Pro: The AI learns exactly what categories you expect.

- Con: Finding or labeling large datasets is difficult.

On the other end of the spectrum, unsupervised learning is completely unlabeled. In unsupervised learning, AI finds groupings itself using various mathematical models.

- Pro: No labeled training data is necessary.

- Con: Your AI may not find the patterns you’re looking for, like “happy”, “sad” and “neutral”. This could be good or bad depending on whether you’re looking for unique insights or not. Machines don’t “think” the same as people, so this is a risk.

A best-of-both-worlds compromise is semi-supervised learning. This combines small labeled datasets with a larger unlabeled dataset.

- Pro: Avoids the major pitfalls of both supervised and unsupervised learning.

- Con: Some methods are time-consuming to set up (among other limitations).
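The difference between supervised and unsupervised learning can be sketched in a few lines of Python. This is a toy illustration under loud assumptions: each Tweet is reduced to two made-up features (a count of positive words and a count of negative words), the supervised model is a simple nearest-centroid classifier, and the unsupervised model is a bare-bones k-means with a naive starting point. Real sentiment analysis uses far richer features and models.

```python
from collections import defaultdict

# Hypothetical data: (positive-word count, negative-word count) per Tweet,
# plus a hand-assigned label that only the supervised model gets to see.
labeled_tweets = [
    ((3, 0), "happy"), ((2, 0), "happy"),
    ((0, 3), "sad"), ((0, 2), "sad"),
    ((0, 0), "neutral"), ((1, 1), "neutral"),
]

def mean_point(points):
    """Average a list of equal-length numeric tuples."""
    n = len(points)
    return tuple(sum(p[i] for p in points) / n for i in range(len(points[0])))

def dist2(a, b):
    """Squared Euclidean distance between two points."""
    return sum((x - y) ** 2 for x, y in zip(a, b))

def train_supervised(examples):
    """Supervised: learn one centroid per hand-assigned label."""
    by_label = defaultdict(list)
    for features, label in examples:
        by_label[label].append(features)
    return {label: mean_point(pts) for label, pts in by_label.items()}

def predict(centroids, features):
    """Pick the label whose centroid is closest to the new Tweet."""
    return min(centroids, key=lambda label: dist2(centroids[label], features))

def kmeans(points, k, iterations=10):
    """Unsupervised: group points into k clusters without any labels."""
    centroids = list(points[:k])  # naive start: the first k points
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: dist2(centroids[i], p))
            clusters[nearest].append(p)
        # Recompute each centroid; keep the old one if a cluster is empty.
        centroids = [mean_point(c) if c else centroids[i]
                     for i, c in enumerate(clusters)]
    return clusters

model = train_supervised(labeled_tweets)
print(predict(model, (4, 0)))  # the model answers in your own categories

unlabeled_tweets = [features for features, _ in labeled_tweets]
groups = kmeans(unlabeled_tweets, k=2)
print(groups)  # groups are anonymous; naming them is up to you
```

The supervised model answers with the categories it was taught. The clustering step only returns unnamed groups, and on this toy data it separates the clearly positive Tweets from everything else rather than recovering “happy”, “sad” and “neutral”, which is the trade-off described in the unsupervised “Con” above.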

Why are so many companies using AI?

AI is technologically powerful because it can accomplish many traditionally-human processes without fatigue, emotions or logical fallacies. If it’s trained on good, representative data, it can also help reduce bias.

Business-wise, AI presents a massive market opportunity. In two reports citing McKinsey, Bank of America Merrill Lynch predicted that by 2020 there will be a $153 billion AI and robot market, with $70 billion of this in “AI-based analytics”.

They predicted by 2025 there will be:

- A 40-50% productivity increase from AI-based solutions for 290 million knowledge workers globally

- $5.2-6.7 trillion increase in annual, worldwide revenue

- 30% additional productivity in many industries

- 18-33% reduced manufacturing labor costs

Beyond the global economic and labor advantages, AI offers benefits like:

- Increased accuracy, speed and quality

- Easily accomplishing human-intelligence tasks at scale

- Recognizing unique insights and patterns

- Freeing people up to spend their time on non-repetitive tasks

Of course, AI isn’t without its concerns, including:

- Biased or low-quality data

- Ethics

- Safety

- Cybersecurity

- And how all this impacts society long-term

The Limitations of AI

Are we all going to be replaced by AI and sentient machines? Despite what the more sensational headlines say, no — not anytime soon, at least.

Sometimes this is because we prefer human interactions to robot and computer ones. In many other cases, human capabilities aren’t yet replaceable.

And often, it’s simply that human-AI partnerships are more powerful than AI (or people) working solo. Understanding this helps you evaluate AI’s business applications for yourself.

As computer scientist Andrew Ng writes in the HBR report What AI Can and Can’t Do (paywall), “Almost all of AI’s recent progress is through one type, in which some input data (A) is used to quickly generate some simple response (B).”

That is, AI can take rough digital sketches (input) and create artwork like Picasso (response), but there are still questions about whether AI can truly be creative.

Intelligent software can look at potential solutions (input) and write its own code (response), but it can’t yet write deeply complex solutions on its own.

And AI can learn from emails (input) to write its own short emails (response), but it still can’t write its own novels without human co-authors. Some elements of human creativity and complex thinking remain beyond AI’s reach.

AI is weak, but it’s still powerful

All of today’s AI is considered “weak” or “narrow” AI — it’s designed to find solutions for specific scenarios. There are no sentient thinking machines (“strong AI”) or artificial general intelligence (AGI). If it existed, an AGI could be a jack of all trades and master writing, painting, programming and music composition. But, unlike people, AI can currently only specialize in one thing.

Another theorized kind of AI is artificial superintelligence (ASI). When you watch or read science fiction and malevolent AI takes over the world, this is usually ASI — a machine brain so powerful it far surpasses even the greatest human savants in every way. Needless to say, ASI doesn’t yet exist, and it may never.

This doesn’t mean AI isn’t powerful.

A narrow AI still solves complex problems and frequently beats humans at their own game, including pattern recognition. But it’s highly-focused and purpose-built; that’s important to keep in mind when thinking about business applications.

Additionally, it’s possible to “fake” AI. Not every seemingly-smart program is AI-based. A rudimentary chatbot might seem intelligent, but it could be matching keywords to a huge database. A chess bot might mimic intellect, but perhaps it’s using a big list of If/Then cases. Because these are pre-programmed, the system isn’t artificially intelligent. It’s just returning what the programmer told it to.
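As an illustration, a keyword-matching chatbot of this kind can be sketched in a few lines of Python. The keywords and canned answers below are hypothetical placeholders, not taken from any real product. Notice that nothing here learns: the bot can only return what was typed into its lookup table.

```python
import re

# Hypothetical canned answers, written in advance by a person.
CANNED_ANSWERS = {
    "refund": "You can request a refund from your order page.",
    "hours": "We are open 9 AM to 5 PM, Monday through Friday.",
    "shipping": "Standard shipping takes three to five business days.",
}
FALLBACK = "Sorry, I didn't understand that. A person will follow up soon."

def reply(message):
    """Return the first canned answer whose keyword appears in the message."""
    words = set(re.findall(r"[a-z]+", message.lower()))
    for keyword, answer in CANNED_ANSWERS.items():
        if keyword in words:
            return answer
    return FALLBACK

print(reply("What are your hours?"))      # matches the "hours" keyword
print(reply("Can you write me a poem?"))  # no keyword, so the fallback
```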

But fake isn’t automatically bad. Fake AI can be a crucial stepping stone on many AI product roadmaps. Companies can begin testing the value prop with a scaled-back proof-of-concept, sans AI.

The proof-of-concept runs in production, the company gathers usage data and user research along with further data that can train the AI, then rolls out the full service later. AI development can be time consuming and costly, so why not go the Agile route and test a pseudo-AI before throwing money at the real thing?

Want to get started trying out AI? We can help.

How AI Applies Across Seven Industries

AI, ML and DL have many incredible applications across industries. We’ve already talked about some of them above. Here are several more to chew on.

1. Finance

Investment bankers have long used computerized trading algorithms, but their static models don’t adjust well to changing markets. In the machine learning age, adaptive algorithms reduce risk and increase gains. Other ways AI helps include fraud reduction and anti-money laundering.

Who’s doing it?

- “Robo-investing” companies like Wealthfront and Betterment, which already manage billions of dollars each.

- Payment anti-fraud startup Fraugster, whose algorithm adjusts with each new transaction and reduces fraud by up to 70%.

2. Healthcare and medicine

We’ve historically relied on doctors for disease diagnosis. But humans, even doctors, are fallible. Over 250,000 Americans die annually from healthcare mistakes; these errors cost $20.8 billion in 2016. Diagnostic algorithms can change healthcare and save lives.

Beyond this, pharmaceutical drug development is incredibly expensive and time-consuming. Drug development costs double every nine years, and it’s only getting slower and more expensive. With millions of potential chemicals to test, researchers have a huge task.

Who’s doing it?

- Lumiata’s suite of AI-predictive tools for chronic conditions and Human Diagnosis Project, which connects doctors to specialists for puzzling diagnoses.

- Stanford University’s deep learning research uses “one-shot learning” to help researchers identify the most promising chemicals for drug development.

- IBM’s latest models which can discover new disease treatments.

3. Security

Machine learning algorithms are faster and better than people at recognizing faces — even privacy-blurred or pixelated ones. Thus, current security solutions like passwords, ID cards, RFID and more may soon be replaced with facial biometrics. Combined with blockchain, it’s even more revolutionary.

Other areas where innovation through AI excels are malware and spam detection. Forget basic rules databases; ML is adaptive and better identifies quickly-changing threats. And with cyberattacks actually powered by AI as a serious future possibility, AI will soon be fighting AI to protect our identities and bank accounts.

Who’s doing it?

- Apple is an obvious choice because of iOS 11’s new lightning-fast Face ID.

- Facebook is helping users identify fraudulent accounts by alerting them when their face is recognized as someone else’s profile photo.

- Cambridge University and ex-British spies (!) have created Darktrace, software using ML instead of a hard-coded catalog to detect network abnormalities.

4. E-Commerce

Immediately featuring the thought-leader here just makes sense. Amazon’s product recommendations are admired because they personalize recommendations for every visitor using an algorithm called “collaborative filtering”. By combining this with ML, they learn people’s “true” preferences and prevent incompatible product suggestions.

The results are incredibly effective: 35% of sales come from recommendations and conversion is up to 60%. To learn more, Rejoiner has an excellent overview of Amazon’s site-wide recommendations.
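The core idea behind collaborative filtering, “people who bought what you bought also bought this”, can be sketched in Python. This is a deliberately simple user-based version with made-up shoppers and products. It is not Amazon’s actual system, which is far more sophisticated and works at a vastly larger scale.

```python
from collections import Counter

# Hypothetical purchase histories.
purchases = {
    "ana": {"tent", "sleeping bag", "lantern"},
    "ben": {"tent", "sleeping bag"},
    "cho": {"tent", "lantern", "stove"},
    "dev": {"novel", "bookmark"},
}

def recommend(user, purchases, top_n=2):
    """Suggest items bought by shoppers whose purchases overlap with the user's."""
    owned = purchases[user]
    scores = Counter()
    for other, items in purchases.items():
        if other == user:
            continue
        overlap = len(owned & items)  # how similar is this shopper?
        if overlap == 0:
            continue                  # nothing in common, ignore them
        for item in items - owned:    # only items the user lacks
            scores[item] += overlap   # similar shoppers count for more
    return [item for item, _ in scores.most_common(top_n)]

print(recommend("ben", purchases))  # ['lantern', 'stove']
```

Ben shares items with Ana and Cho, so their other purchases are suggested, with the lantern ranked first because both of them bought it. Dev has nothing in common with Ben, so books never show up in Ben’s recommendations.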

Who else is doing it?

- Netflix recommendations, which divide viewers into thousands of “taste groups”.

- Spotify combines three different recommendation models (including collaborative filtering) to create its amazing “Discover Weekly” song suggestions.

5. Marketing

Email marketing remains one of the best customer outreach methods. Apply machine learning and it’s even more powerful: think automatically-generated, audience-optimized subject lines, email copy and calls-to-action; optimized send times; even personalized images and product recommendations. In the past, these were calculated (or guessed) by people, even entire email marketing departments. In the future, machines will do them.

Other possibilities include personalization and targeting ads, or even analyzing how people on social media feel about your brand.

Who’s doing it?

- Phrasee identifies the most effective email copy. Their subject lines outperform humans 95% of the time.

- Touchstone virtually simulates the email efficacy for different email content instead of sending any actual emails.

- Adobe Campaign uses “Sensei AI” to personalize email images.

6. Design & user research

What do future creatives do in an AI world? Designers might be “curators, not creators”. They’d make abstracted interfaces, while AI would generate variations to speed up design iterations.

For user research, designer and strategist Ruth Kikin-Gil writes that AI might extract user study insights automatically, or perhaps create chatbots to have discussions with abstracted user personas. Sentiment analysis can even measure feelings during user-testing.

And AI can already run A/B or multivariate tests to determine effective website or email UI and copy, learning from findings and automatically incorporating results into the next round of testing.

Who’s doing it?

- IBM Watson’s tone analyzer can analyze social media sentiments and customers’ tone on support calls.

- Airbnb’s “Sketching Interfaces” internal tool is practically magic, turning low fidelity, hand-sketched wireframes into coded, high-fidelity wireframes in an instant.

- And here are over 50 additional possibilities in the AI and design space.

7. Business and customer service

Recruiting is one major business application: AI is already helping businesses sift through resumes faster by identifying top candidates, even simulating a new hire’s first day on the job. AI hiring software has many positives, including removing hiring bias through data-based objectivity instead of personal subjectivity.

Not to mention, AI is already being used to write earnings and market reports, and numerous companies have implemented chatbots for customer service, including Facebook.

Who’s doing it?

- Indeed Assessments automates hiring by identifying top candidates with simulated job environments, AI “customers” and various other assessments.

- Textio uses linguistic machine learning to draw in 25% more qualified candidates and 23% more women, while Talent Sonar automatically rewrites job ads for broader reach.

- Press Association automates writing non-investigative articles, press releases and market reports.

- Facebook’s B2C chatbots let businesses provide automated customer support.

Is Artificial Intelligence right for your business?

Looking at the examples above, it’s easy to see how many use cases exist for AI, ML and DL. But it’s just the beginning! Your business could benefit from AI. Wanna find out? Click here to get started chatting, or keep on reading for questions you should be asking.

However, AI isn’t a magic bullet and comes with legitimate concerns that should be addressed. We’ve created a list of questions, considerations and tradeoffs to keep in mind when weighing it as a business and project possibility.

First: Will AI solve your business problems?

Pivotal questions address whether your business problems are solved by AI. In particular:

1. Automatability: Does my business have targeted or repetitive processes, tasks, scenarios and/or roles which are well-suited to AI? (Remember that AI addresses narrow scenarios, but it’s powerful within those.)

2. Intelligence: Do my processes require human intelligence or decision making?

3. Evolution: Do my processes need a solution that organically learns and evolves with new insights?

The most important questions are #2 and #3. If all you need is automation, there are plenty of non-AI software solutions.

However, if you need a solution that’s both intelligent and capable of learning, chances are good you would benefit from AI (ML, to be exact).

Second: What solution do you need?

Once you’ve determined whether AI is appropriate for your business, you should consider what kind of solution you want:

Role

- Do I want my solution to collaborate with people and make them better at their existing jobs? Or will it replace jobs completely?

Scalability

- Do I want a solution that works “at scale”? In other words, how much human effort, if any, is being replaced by this solution?

- How much scalability does the system need to have?

Transparency

- How important is it to understand the “why” behind the decisions my AI makes?

Third: Are you well-equipped for implementation?

Finally, as you look at implementation, consider:

Agility

- Can my business incorporate this new technology in an Agile way?

- How difficult will it be to integrate with my current software tools and infrastructure?

- Will I be able to launch and iterate in small pilots?

Implementation and training

- Do I have access to the training datasets I need?

- Is the data unbiased and clean? If not, how will I get this data or clean it up?

- Do I already have AI, ML or DL experts who can code this?

- If not, how will I be hiring? In-house or an agency?

Safety and security

- Are there any ethical or safety concerns (like self-driving cars)?

- If something goes wrong in production, what will the impact be? How will my business handle that?

- Are there security concerns? If my AI has access to important systems, how do I ensure that it can’t be hacked or compromised by malicious people or other AI?

You don’t have to answer these questions immediately. Nonetheless, keep them in mind as you move towards incorporating AI.

Have questions about if your business is well equipped? Ask us!

Understanding trade-offs

Assisting vs. replacing

One of the above questions asks about AI’s role — specifically, whether it should assist people’s efforts or replace them completely. There are valid reasons and scenarios to do both.

Assisting people

If your problem is creative or abstract, it might make sense to augment people’s abilities. In many cases, people and AI work better together; their intelligences and abilities are complementary.

As we’ve seen, AI can speed up design iterations and shorten the path from sketch to prototype. Think about where your process tedium exists and how that can be alleviated. What aspects of your business would be better with a one-click process? How can AI make you and others better and faster at their jobs?

Replacing people

There are some things that just make sense to completely replace with AI, whether for cost savings, safety or feasibility.

For example:

- It’s cheaper… to use an AI chatbot for common customer service issues.

- It’s safer… to have AI operate dangerous machinery.

- It’s more feasible… to have AI on-call 24/7/365 for IT system issues.

When considering this option, ask:

- What aspects of your business can be completely replaced by AI?

- What are the risk/benefit trade-offs?

- Do open source or commercial products already exist?

- What’s the impact to customers or employees? If this is a customer-facing experience, is there still value to human interaction even if AI seems more efficient at first?

Transparency vs. ingenious complexity

Another question above asked whether you need to understand the “why” behind your AI’s decisions. Some AI is more transparent than others.

For example, some AI uses structures called decision trees. As data flows into the tree, you can see the path the decision takes: “Is this Tweet happy, neutral, or sad? If it’s happy, send it down the left branch to the next decision.”

Of course, these can also quickly become complex and slow (and expensive to build). Nonetheless, you could still visualize a large, complex decision tree. You might just need a really big wall.
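To show what that transparency looks like in practice, here is a small Python sketch of a decision tree that records every question it asks on the way to an answer. The tree is hand-built and the two features (positive and negative word counts) are assumptions for illustration; real decision trees are learned from data and are usually much larger.

```python
# Hypothetical, hand-built tree; real decision trees are learned from data.
TREE = {
    "question": "more positive than negative words",
    "test": lambda tweet: tweet["positive_words"] > tweet["negative_words"],
    "yes": {"label": "happy"},
    "no": {
        "question": "more negative than positive words",
        "test": lambda tweet: tweet["negative_words"] > tweet["positive_words"],
        "yes": {"label": "sad"},
        "no": {"label": "neutral"},
    },
}

def classify(tweet, node=TREE):
    """Walk the tree and record every decision taken along the way."""
    path = []
    while "label" not in node:
        branch = "yes" if node["test"](tweet) else "no"
        path.append(f"{node['question']}? {branch}")
        node = node[branch]
    return node["label"], path

label, path = classify({"positive_words": 0, "negative_words": 2})
print(label)        # sad
for step in path:   # the audit trail behind that answer
    print(" ", step)
```

The returned path is the “why” behind the decision, so anyone can read it back and check each step.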

Some AI is less transparent. For example, you can visualize how simple neural networks work, but once they become more complex it’s not so clear how they’re making decisions. So far, neural networks behave as expected in scenarios like self-driving cars. But if they didn’t for some reason, it might not be possible to “audit” what happened. As complexity grows, transparency sometimes shrinks.

Quantity vs. quality

Many people emphasize large datasets (or “Big Data”) for ML. Yes, having enough data is important, especially for neural networks and deep neural networks. But you don’t always need a huge dataset for a successful AI.

Additionally, quality is often just as, or more, important. In fact, removing bad data and outliers from large datasets is very difficult. And poor quality data makes poor quality AI.

If a person reads a bad source, they learn incorrect information. Intelligent machines are the same. Untaught machines are naive; they don’t know what information is correct or incorrect. If you put garbage in, you get garbage out.
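A small Python sketch shows how a single bad value can distort what a machine “learns”. The commute times below are made up, and the filter is just one common rule of thumb (a modified z-score based on the median absolute deviation), shown here as an assumption rather than a recommended cleaning pipeline. Real data cleaning is harder, because bad records are rarely this obvious.

```python
from statistics import mean, median

def drop_outliers(values, cutoff=3.5):
    """Remove values whose modified z-score exceeds the cutoff."""
    center = median(values)
    # Median absolute deviation: a spread measure that outliers barely affect.
    mad = median([abs(v - center) for v in values])
    if mad == 0:
        return list(values)  # no spread to measure against
    return [v for v in values if 0.6745 * abs(v - center) / mad <= cutoff]

# Hypothetical commute times in minutes; 240 is a data-entry error for 24.
commute_minutes = [22, 25, 24, 23, 26, 240]
cleaned = drop_outliers(commute_minutes)

print(mean(commute_minutes))  # 60, garbage in
print(mean(cleaned))          # 24, after removing the bad record
```

One mistyped record more than doubles the average commute here. A model trained on the raw data would inherit that error.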

The Future of AI

There are so many exciting AI trends on the horizon, but, as we enter 2018, the ones we find most thrilling address some key AI issues and limitations, including:

- Unsupervised learning improvements: AI which can teach itself is immensely powerful. As unsupervised learning improves, it will be less necessary to have labeled data. Someday, AI may even be able to self-build, self-improve and self-teach.

- AI for smaller datasets: “One-shot learning” is one approach to working with small datasets. In the future, we’ll see more (and better) ways to address small (or poor quality) datasets.

- AI chips in flagship phones: As artificial intelligence (and neural networks, in particular) has become fundamental to the devices in our pockets, manufacturers are adding dedicated “AI chips” which handle AI processing in its entirety. Just like a GPU is a dedicated graphics processing unit, an AI chip just handles AI-related tasks. In theory, this offers not only better performance and battery life, but also better data security. Look for way more of these in 2018.

A Deep Revolution

At Jakt, we’re incredibly passionate about what’s in store for artificial intelligence, machine learning and deep learning. Too often, this topic is approached with an existentialist dread; we think that’s because people don’t realize the extent to which AI is already an integral part of everyone’s lives.

In the last almost-decade, AI — specifically machine and deep learning — has completely revolutionized almost every industry and product. It’s progressed from something in computer scientists’ books, brains and research to something that’s in practically every pocket and purse.

We’re in the middle of an AI revolution, and it’s not going anywhere. So, whether you’re creating AI for customer service or cancer detection, let us know how our purpose-built solutions and AI experts can help. We can’t wait to see what you build next.

Looking to learn more about emerging technologies?

Check out our innovator guides about Blockchain and Voice.
